Product Description

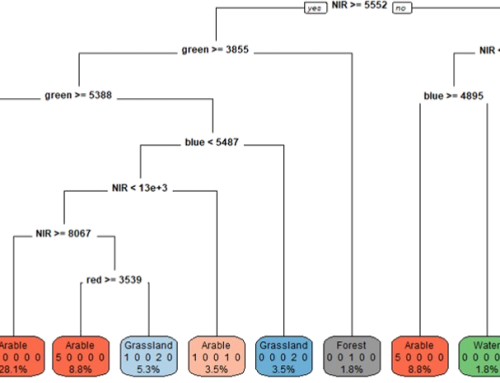

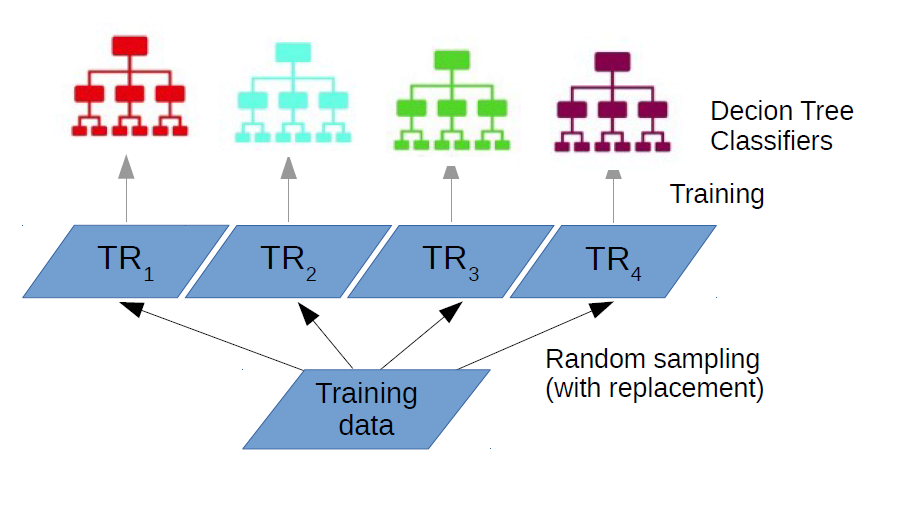

Random Forests (RF) is one of the most popular machine learning classifiers for two reasons. It can achieve good classification results with a relatively small number of training samples, and it requires only a few user-defined parameters.

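In addition, RF can address the Hughes phenomenon thanks to two sources of randomness. The Hughes phenomenon is the drop in classification accuracy that occurs when the number of input variables grows while the number of training samples stays fixed. The two sources of randomness are as follows. First, each of a user-defined number of decision trees is grown on a random selection of the training samples. Second, a user-defined number of randomly selected variables is considered when splitting each tree node.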
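The following minimal Python sketch illustrates the two randomness dimensions using only the standard library. The function names `bootstrap_indices`, `candidate_features` and `default_mtry` are hypothetical. The square-root default for the number of split variables (often called `mtry`) is a common convention for classification, not something prescribed by this lecture.

```python
import math
import random

def bootstrap_indices(n_samples, rng):
    """First randomness dimension: draw n_samples training indices WITH replacement.
    Each tree gets its own bootstrap sample, so some samples repeat and others are left out."""
    return [rng.randrange(n_samples) for _ in range(n_samples)]

def candidate_features(n_features, mtry, rng):
    """Second randomness dimension: pick mtry distinct variables to evaluate at one node split."""
    return rng.sample(range(n_features), mtry)

def default_mtry(n_features):
    """Assumed common default for classification: floor(sqrt(number of variables)), at least 1."""
    return max(1, math.isqrt(n_features))

rng = random.Random(42)
n_samples, n_features = 10, 6
mtry = default_mtry(n_features)  # 2 for 6 variables
for tree in range(3):
    in_bag = bootstrap_indices(n_samples, rng)
    print(f"tree {tree}: in-bag samples {sorted(in_bag)}, "
          f"root-node candidates {candidate_features(n_features, mtry, rng)}")
```

In a full Random Forest, `candidate_features` would be called again at every node of every tree, not just once at the root.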

In this lecture, we will introduce the concept of an ensemble classifier, emphasizing the main methods used to build an ensemble: bootstrapping and bagging. We will explain how an RF classifier is built and how its final decision is made by a majority vote among the trees. The out-of-bag (OOB) concept will be explained in detail, especially its role in the internal evaluation of RF accuracy. We will also explain how RF calculates variable importance, a measure that can be used to discard the less relevant input variables.
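The following compact sketch illustrates bagging, majority voting, out-of-bag (OOB) evaluation and permutation-based variable importance. It is an illustration, not the lecture's implementation, and it makes several simplifying assumptions:

- It replaces full decision trees with one-split trees (decision stumps).
- It uses the Gini impurity as the split criterion.
- It measures importance as the mean increase in per-tree OOB error after shuffling one variable among that tree's OOB samples.

All function names are hypothetical.

```python
import random
from collections import Counter

def majority(labels):
    """Majority vote; Counter.most_common breaks ties by first occurrence."""
    return Counter(labels).most_common(1)[0][0]

def gini(labels):
    """Gini impurity of a list of class labels (0.0 for an empty list)."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values()) if n else 0.0

def fit_stump(X, y, idx, features):
    """One-split tree fitted on in-bag samples `idx`, searching only `features`."""
    best = None
    for f in features:
        for thr in sorted({X[i][f] for i in idx}):
            left = [y[i] for i in idx if X[i][f] <= thr]
            right = [y[i] for i in idx if X[i][f] > thr]
            if not right:
                continue
            score = len(left) * gini(left) + len(right) * gini(right)
            if best is None or score < best[0]:
                best = (score, f, thr, majority(left), majority(right))
    if best is None:  # no usable split: always predict the in-bag majority class
        label = majority([y[i] for i in idx])
        return (0, float("inf"), label, label)
    return best[1:]  # (feature, threshold, left label, right label)

def predict_stump(stump, x):
    f, thr, left_label, right_label = stump
    return left_label if x[f] <= thr else right_label

def fit_forest(X, y, n_trees, mtry, seed=0):
    """Bagging: each tree sees a bootstrap sample and a random subset of mtry variables."""
    rng = random.Random(seed)
    n, p = len(X), len(X[0])
    forest = []
    for _ in range(n_trees):
        in_bag = [rng.randrange(n) for _ in range(n)]
        stump = fit_stump(X, y, in_bag, rng.sample(range(p), mtry))
        oob = sorted(set(range(n)) - set(in_bag))  # samples this tree never saw
        forest.append((stump, oob))
    return forest

def predict_forest(forest, x):
    """Final decision: majority vote over all trees."""
    return majority([predict_stump(stump, x) for stump, _ in forest])

def oob_error(forest, X, y):
    """Classify each sample using only the trees for which it was out-of-bag."""
    errors = counted = 0
    for i in range(len(X)):
        votes = [predict_stump(s, X[i]) for s, oob in forest if i in oob]
        if votes:
            counted += 1
            errors += majority(votes) != y[i]
    return errors / counted if counted else float("nan")

def permutation_importance(forest, X, y, f, seed=0):
    """Mean increase in per-tree OOB error after shuffling variable f among OOB samples."""
    rng = random.Random(seed)
    diffs = []
    for stump, oob in forest:
        if not oob:
            continue
        values = [X[i][f] for i in oob]
        rng.shuffle(values)
        base = sum(predict_stump(stump, X[i]) != y[i] for i in oob)
        perm = sum(
            predict_stump(stump, X[i][:f] + [v] + X[i][f + 1:]) != y[i]
            for i, v in zip(oob, values)
        )
        diffs.append((perm - base) / len(oob))
    return sum(diffs) / len(diffs) if diffs else 0.0

# Toy data: variable 0 separates the classes, variable 1 is uninformative.
X = [[i, (i * 7) % 5] for i in range(20)]
y = ["A" if row[0] < 10 else "B" for row in X]
forest = fit_forest(X, y, n_trees=25, mtry=2, seed=1)
print(predict_forest(forest, [2, 0]), oob_error(forest, X, y))
print([permutation_importance(forest, X, y, f) for f in range(2)])
```

In this toy example, `mtry=2` equals the number of variables, so the forest reduces to plain bagging. Setting `mtry=1` adds the second randomness dimension. The uninformative variable 1 should receive an importance close to zero.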

Finally, special attention is given to the proximity measure, which is used to identify either outliers or subclasses in the training samples.
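The following sketch shows how the proximity measure can be computed. It assumes the terminal node (leaf) that each training sample reaches in each tree is already known; the leaf assignments below are made up for illustration.

The outlier score is a simplified variant in the spirit of Breiman and Cutler's raw outlier measure: the number of samples divided by the summed squared proximities to the other samples of the same class. Excluding the sample itself from that sum is an assumption of this sketch. Their within-class normalization by median and deviation is omitted. The function names are hypothetical.

```python
def proximity_matrix(leaf_ids):
    """leaf_ids[t][i] is the terminal node reached by training sample i in tree t.
    prox[i][j] is the fraction of trees in which samples i and j share a terminal node."""
    n_trees, n = len(leaf_ids), len(leaf_ids[0])
    counts = [[0] * n for _ in range(n)]
    for leaves in leaf_ids:
        for i in range(n):
            for j in range(n):
                counts[i][j] += leaves[i] == leaves[j]  # True counts as 1
    return [[c / n_trees for c in row] for row in counts]

def raw_outlier_scores(prox, y):
    """Large score = the sample rarely shares a leaf with other samples of its own class.
    Score = (number of samples) / (sum of squared proximities to other same-class samples)."""
    n = len(y)
    scores = []
    for i in range(n):
        s = sum(prox[i][k] ** 2 for k in range(n) if k != i and y[k] == y[i])
        scores.append(n / s if s > 0 else float("inf"))
    return scores

# Hypothetical leaf assignments: 3 trees x 4 training samples, all labelled "water".
leaf_ids = [[0, 0, 0, 1],
            [2, 2, 2, 3],
            [1, 1, 0, 0]]
y = ["water"] * 4
prox = proximity_matrix(leaf_ids)
scores = raw_outlier_scores(prox, y)
print(scores.index(max(scores)))  # -> 3, the sample that almost never shares a leaf
```

Samples with high scores are candidates for removal from the training set. Groups of samples with high mutual proximity within one class may point to subclasses.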

Learning outcomes

Define the concept of ‘ensemble classifier’.

Explain the concepts of bagging and bootstrapping.

Describe how variable importance is calculated in the Random Forest classifier.

Explain the role of the proximity measure in removing outliers from the training sample set.

List the main advantages and disadvantages of the RF classifier.

BoK concepts

Links to concepts from the EO4GEO Body of Knowledge used in this course:

- AM | Analytical Methods
  - AM10 | Data mining
    - AM10-2 | Data mining approaches
- IP | Image processing and analysis
  - IP3 | Image understanding
    - IP3-4 | Image classification
      - IP3-4-5 | Decision trees
      - IP3-4-7 | Machine learning
      - IP3-4-9 | Sampling strategies

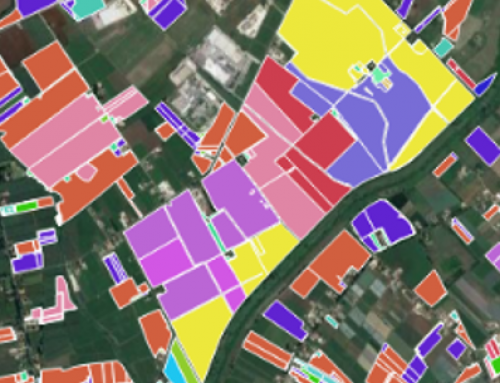

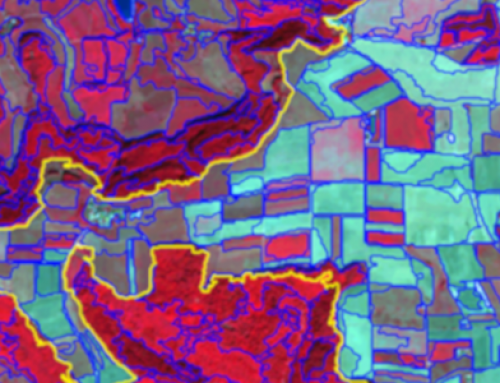

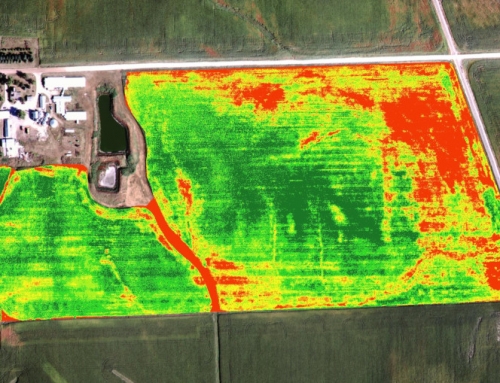

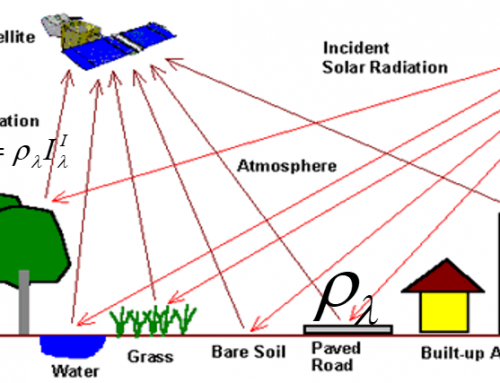

Material preview

Ownership

Designed and developed by: Mariana Belgiu, University of Twente.

Contributor: Anurag Kulshrestha.

License: Creative Commons Attribution-ShareAlike.

Education level

EQF 6

Language

English

Creation date

2020-07-27

Access

Find below a direct link to the HTML presentation.

Find below a link to the GitHub repository where you can download the presentation.